In this web scraping tutorial, you will learn what web scraping is, why and how it is done, and where you can apply it, with examples from study and business.

In 2020, a huge number of businesses shifted from offline to online markets. As a result, a massive amount of sales and purchasing, e-commerce, advertising, and social media data started being generated rapidly.

Every business needs business insights, customer patterns, and a record of every transaction to increase profit.

Daily analysis of customer and product information is crucial for making sound decisions that support business growth.

However, before you can analyze data, you first need it in a correct and structured form.

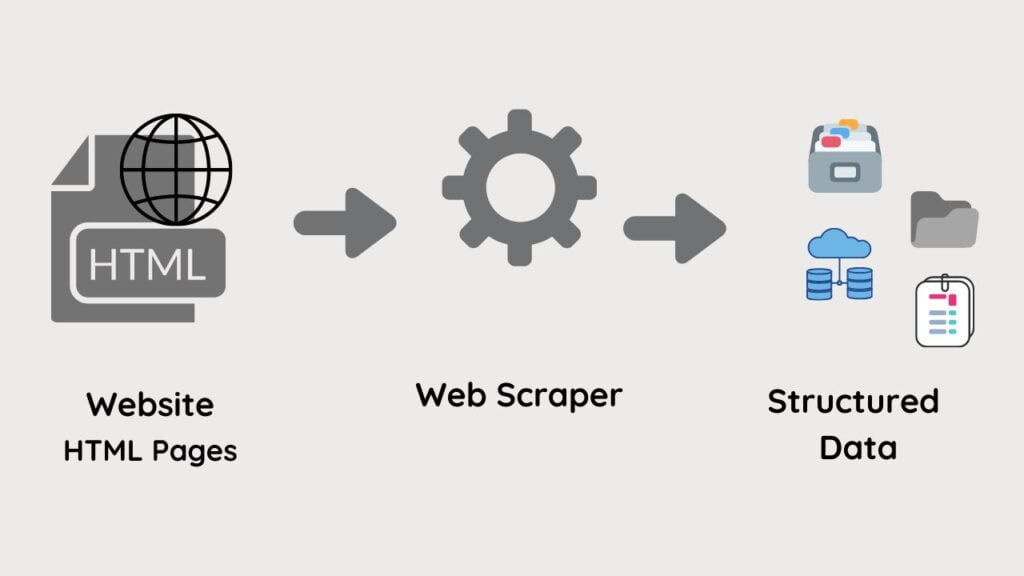

The process of collecting data and storing it in a data source is called scraping. Because it is mostly done on the web, it is called web scraping.

The meaning of web scraping is simple: getting data from the web using automated tools or programming languages.

Different web scraping projects, tools, and software can be used in this process to collect accurate data automatically.

What is Web Scraping?

It is an automated or manual data acquisition process for distinct data platforms, including webpages, PDFs, HTML or XML tags, and many more.

It is a popular and rapidly growing data extraction process for online pages, and there is a crucial reason behind that.

The more data you have, the more information you get for better decision making, and this is a major motivation for scraping.

It can use different scraping tools or programming libraries to gather a huge amount of information.

Software users create and automate these processes by using a bot or web crawler to gather a large amount of information.
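As a rough illustration of the bot or web crawler idea, the sketch below walks a tiny in-memory "site" breadth-first using only Python's standard library. The `PAGES` dictionary, its paths, and the `LinkParser` and `crawl` names are hypothetical stand-ins for this example; a real crawler would download each page over HTTP instead.

```python
from collections import deque
from html.parser import HTMLParser

# Hypothetical in-memory "website": path -> HTML.
# A real bot would download these pages instead.
PAGES = {
    "/": '<a href="/products">Products</a> <a href="/about">About</a>',
    "/products": '<a href="/products/1">Item 1</a> <a href="/">Home</a>',
    "/products/1": "<p>Item 1 costs $10</p>",
    "/about": '<a href="/">Home</a>',
}


class LinkParser(HTMLParser):
    """Collects the href of every <a> tag it sees."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(start):
    """Visit every page reachable from `start`, breadth-first, once each."""
    visited = []
    queue = deque([start])
    while queue:
        path = queue.popleft()
        # Skip pages already seen and links that point outside the "site".
        if path in visited or path not in PAGES:
            continue
        visited.append(path)
        parser = LinkParser()
        parser.feed(PAGES[path])
        queue.extend(parser.links)
    return visited


print(crawl("/"))
```

The queue keeps the order of discovery, and the `visited` list stops the crawler from looping forever when pages link back to each other.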

Organizations rely on data analytics to generate leads and financial growth from the enormous amount of data collected from these sources.

Why Do We Do Web Scraping?

It is a data extraction process in which we accumulate information and store it in several data sources.

E-commerce companies aggregate data like product names, prices, and specifications to generate leads, compare prices, and make business decisions.

Healthcare companies often scrape data about doctors, healthcare specialists, institutions, meetings, journals, diseases, clinical trial information, etc.
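To make the price comparison use case concrete, here is a minimal sketch that picks the cheapest offer for each product. The `RECORDS` list, the store names, and the `cheapest_offers` function are invented for illustration and assume the data has already been scraped.

```python
# Hypothetical scraped records: (store, product, price).
RECORDS = [
    ("StoreA", "Pen", 1.50),
    ("StoreB", "Pen", 1.20),
    ("StoreA", "Book", 12.00),
    ("StoreB", "Book", 13.50),
]


def cheapest_offers(records):
    """Map each product to the (store, price) pair with the lowest price."""
    best = {}
    for store, product, price in records:
        if product not in best or price < best[product][1]:
            best[product] = (store, price)
    return best


print(cheapest_offers(RECORDS))
```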

How Do We Do Web Scraping?

Modern database systems come with a graphical application environment that enables users to query and explore data from the database.

Data also exists in conventional files such as text files, Excel spreadsheets, PDFs, and documents, and in multimedia such as images, videos, and audio.

Scripting languages are commonly employed to obtain data from several files and data sources.

Modern scripting languages like Python, JavaScript, PHP, Perl, R, Octave, and MATLAB are widely used and well suited to data collection processes.

General-purpose and specialized programming languages provide functions that make data extraction simple.

Website data is often defined in formats such as XML and JSON, which describe the contents of each webpage.

An increasingly popular way to get data from websites is to use scraping tools, which make gathering XML and JSON data easy.
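As a small sketch of how XML and JSON data can be read once it has been fetched, the example below uses Python's standard `json` and `xml.etree.ElementTree` modules. The two payloads and the function names are hypothetical, standing in for whatever a real website or API returns.

```python
import json
import xml.etree.ElementTree as ET

# Hypothetical payloads, standing in for what a website or API might return.
JSON_TEXT = '{"products": [{"name": "Pen", "price": 1.5}, {"name": "Book", "price": 12.0}]}'
XML_TEXT = """
<products>
  <product><name>Pen</name><price>1.5</price></product>
  <product><name>Book</name><price>12.0</price></product>
</products>
"""


def products_from_json(text):
    """Return (name, price) pairs from the JSON payload."""
    data = json.loads(text)
    return [(p["name"], float(p["price"])) for p in data["products"]]


def products_from_xml(text):
    """Return (name, price) pairs from the XML payload."""
    root = ET.fromstring(text.strip())
    return [
        (p.findtext("name"), float(p.findtext("price")))
        for p in root.findall("product")
    ]


print(products_from_json(JSON_TEXT))
print(products_from_xml(XML_TEXT))
```

Both functions return the same list of pairs, so the rest of a program does not need to care which format the source used.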

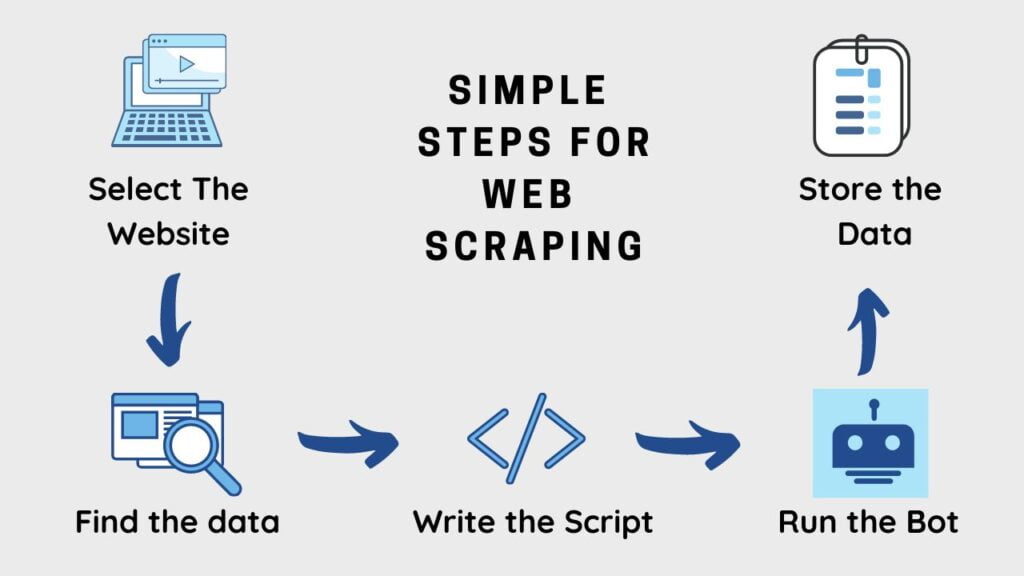

What are the Steps in Web Scraping?

- Select the right URL or website that you desire to scrape

- Find and research the data you want to extract

- Inspect the HTML of the web pages and understand the data tags for scraping

- Write the script or scraping code

- Run the bot or code to extract the data

- Save and store the extracted data in a correct format
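The steps above can be sketched end to end with Python's standard library. This is only an illustration under stated assumptions: the `HTML` string is a made-up stand-in for a downloaded page, and the `product`, `name`, and `price` class names, as well as `ProductParser`, `scrape`, and `to_csv`, are hypothetical.

```python
import csv
import io
from html.parser import HTMLParser

# Steps 1-2: choose the page and the data to extract.
# This HTML is a hypothetical stand-in for a downloaded page.
HTML = """
<ul>
  <li class="product"><span class="name">Pen</span><span class="price">1.50</span></li>
  <li class="product"><span class="name">Book</span><span class="price">12.00</span></li>
</ul>
"""


# Steps 3-4: after inspecting the tags, write the scraping code.
class ProductParser(HTMLParser):
    """Builds one dict per <li class="product"> from its name/price spans."""

    def __init__(self):
        super().__init__()
        self.rows = []
        self._field = None  # name of the span currently being read, if any

    def handle_starttag(self, tag, attrs):
        cls = dict(attrs).get("class")
        if tag == "li" and cls == "product":
            self.rows.append({})
        elif tag == "span" and cls in ("name", "price") and self.rows:
            self._field = cls

    def handle_data(self, data):
        if self._field:
            self.rows[-1][self._field] = data.strip()

    def handle_endtag(self, tag):
        if tag == "span":
            self._field = None


# Step 5: run the code to extract the data.
def scrape(html):
    parser = ProductParser()
    parser.feed(html)
    return parser.rows


# Step 6: save the extracted data in a structured format (CSV text here).
def to_csv(rows):
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=["name", "price"])
    writer.writeheader()
    writer.writerows(rows)
    return buffer.getvalue()


rows = scrape(HTML)
print(to_csv(rows))
```

Writing to an in-memory buffer keeps the sketch self-contained; swapping `io.StringIO()` for a file opened with `newline=""` would store the same rows on disk.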

Where Is Web Scraping Used?

It is widely used in the banking and finance sector and in stock market data analysis to understand money and market trends.

Market analysis includes estimating company fundamentals, public sentiment integration, news monitoring, investment decision making, etc.

The retail industry uses data scraping for market pricing and revenue optimization, market trend analysis, investment decision making, competitor monitoring, etc.

In the real estate sector, data is scraped to understand market direction, appraise property values, monitor competitors, etc.

A large amount of data is also collected in many other sectors, such as healthcare, news, sports, marketing, and technology.

Conclusion

Web scraping is a form of data collection or data gathering, done manually or automatically, from the internet into different data formats.

Scraped data stored in data sources and the file system can be retrieved easily for understanding and analysis.

According to a study, the modern world is heavily dependent on data analysis for decision making.

If you are a programmer, performing web scraping with JavaScript and Python can be an essential skill.

Getting data from a variety of sources with different programming languages is straightforward, and in Python you can do it with a popular web scraping library called Beautiful Soup.
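As a minimal sketch of what this looks like in practice, the example below parses a small HTML snippet with Beautiful Soup. It assumes the third-party `beautifulsoup4` package is installed (for example with `pip install beautifulsoup4`); the HTML snippet and the `title` class name are made up for this example.

```python
from bs4 import BeautifulSoup  # third-party package: beautifulsoup4

# Hypothetical HTML, standing in for a page you have already downloaded.
HTML = """
<div>
  <h2 class="title">First article</h2>
  <a href="/first">Read more</a>
  <h2 class="title">Second article</h2>
  <a href="/second">Read more</a>
</div>
"""

# Parse with Python's built-in HTML parser backend.
soup = BeautifulSoup(HTML, "html.parser")

# Text of every <h2 class="title"> element.
titles = [h.get_text(strip=True) for h in soup.find_all("h2", class_="title")]

# href attribute of every <a> element that has one.
links = [a["href"] for a in soup.select("a[href]")]

print(titles)
print(links)
```

`find_all` looks elements up by tag name and attributes, while `select` accepts a CSS selector; either style works for simple pages like this one.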

Meet our Analytics Team, a dynamic group dedicated to crafting valuable content in the realms of Data Science, analytics, and AI. Comprising skilled data scientists and analysts, this team is a blend of full-time professionals and part-time contributors. Together, they synergize their expertise to deliver insightful and relevant material, aiming to enhance your understanding of the ever-evolving fields of data and analytics. Join us on a journey of discovery as we delve into the world of data-driven insights with our diverse and talented Analytics Team.